President Donald Trump on Friday ordered the entire federal government to stop using products from the artificial intelligence company Anthropic to prevent what he called a “woke, radical left-wing company” from encroaching on the military’s decision-making.

The public dispute between the Pentagon and Anthropic that resulted in the company's blacklisting has effectively become a proxy for the broader battle over the future governance of AI.

Coverage has focused on Anthropic's refusal to budge on its two "red lines" (using its product for mass domestic surveillance or to power fully autonomous weapons) and on whether Defense Secretary Pete Hegseth's Pentagon can be trusted with powerful software under the looser requirement, which the administration demands, that it be used only "legally."

But according to reports this week, the confrontation that sparked the dispute actually focused on a different but related issue: how AI could be used in the event of a nuclear attack on the United States.

Semafor and the Washington Post reported that in early December, Undersecretary of Defense for Research and Engineering Emil Michael asked Anthropic's Dario Amodei whether, in a scenario in which nuclear missiles were flying toward the U.S., the company would "refuse to help his country because of Anthropic's ban on using its technology in conjunction with autonomous weapons." Administration sources say Michael became enraged when Amodei replied that the Pentagon should call Anthropic and check with him first. Anthropic disputes this account and says it was willing to create an exception for missile defense, but either way, the conversation poisoned relations between the two institutions. (Disclosure: Vox's Future Perfect is funded in part by the BEMC Foundation, whose primary funder was also an early investor in Anthropic; they have no editorial input on our content.)

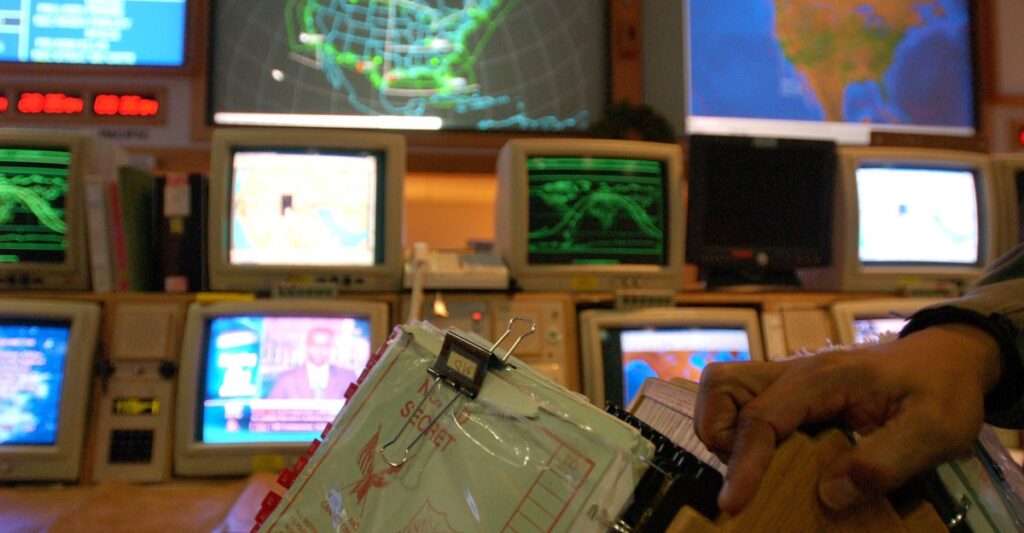

As I reported for Vox in November, there is an active and ongoing debate about whether and how artificial intelligence should be integrated into nuclear command and control systems. We don't know to what extent it already is, but we do know that the U.S. military is actively looking for ways to use AI and machine learning "to enable and accelerate human decision-making."

Discussions about nuclear weapons and AI tend to focus on whether machines would ever be given the ability to launch nuclear weapons, and on the imperative of keeping a "human in the loop" in decisions about using humanity's deadliest weapons. But many experts and officials say that debate is the easy part: Neither the United States nor any other country is likely ever to hand the decision on whether to order a nuclear strike to AI.

A much more complicated question is the extent to which AI should be relied on for functions such as “strategic warning” – synthesizing the enormous amount of data collected by satellites, radars and other sensor systems to detect potential threats as early as possible.

This is the kind of hypothetical use case that Michael appears to have been proposing to Amodei. If the system were used only to give us a better chance of knocking down an incoming missile, it might seem like a no-brainer.

But in a scenario in which the United States was under attack with ballistic missiles, the president would immediately face a decision (which would have to be made in just a few minutes) about whether to retaliate, which could trigger a full-blown nuclear war.

The lives of millions of people could depend on the system working correctly, and the history of nuclear weapons offers many examples of detection systems producing false alarms that brought the world close to disaster and were averted only by human intuition.

The technology to perform that type of threat detection likely doesn’t exist yet, which, given what’s at stake, may have been one of the reasons Amodei was reluctant to commit to this scenario.

Retired Lt. Gen. Jack Shanahan, who flew nuclear missions in the Air Force and later served as head of the Pentagon's Joint Artificial Intelligence Center, told Vox that if detection of and response to nuclear threats were turned over to AI agents, "I don't want to say it's certain there's going to be a catastrophe, but I think we're headed down that path."

He pointed to a widely reported study published this week by a researcher at King's College London, which found that AI models, including Claude, ChatGPT and Google Gemini, were much more likely than human participants to recommend nuclear options in simulated war games. In such a scenario, an AI might not be launching a weapon itself, but a president would have to override the recommendation of a multibillion-dollar system sounding the alarm, all under extreme pressure.

One factor that differentiates military use of AI from previous technologies with obvious national security applications is that, in this case, much of the cutting-edge research was conducted by private companies with an eye on the commercial market, rather than in response to military demand. (An example of the latter would be the internet, which grew out of Department of Defense and academic projects long before companies found commercial uses for it.)

The new dynamic is sure to lead to culture clashes, particularly between Pete Hegseth's "anti-woke" Pentagon and a company like Anthropic, which has so far been happy to let the Pentagon use its product but has built its public image around concerns about AI safety.

"Boeing would never object to building something the government asked them to build," said Shanahan, who led the Pentagon's controversial 2018 partnership with Google, Project Maven, an earlier culture clash between DC and Silicon Valley. "It is a defense industrial base company. [AI is] being born into a very different world, with a group of people who don't see things the way Lockheed employees might have seen the Cold War. It's Mars-Venus to a certain extent."

How the clash plays out and whether other companies are willing to allow their models to be deployed with fewer questions asked can go a long way toward determining what role AI could play in a hypothetical nuclear war.

This story was produced in partnership with the Outrider Foundation and Journalism Funding Partners.